Models & Frontiers

Key Guides

The Inference Budget Just Got Interesting

OpenAI's o1 made headlines for "thinking harder" during inference. But the real story isn't that a model can spend more tokens on reasoning: it's that...

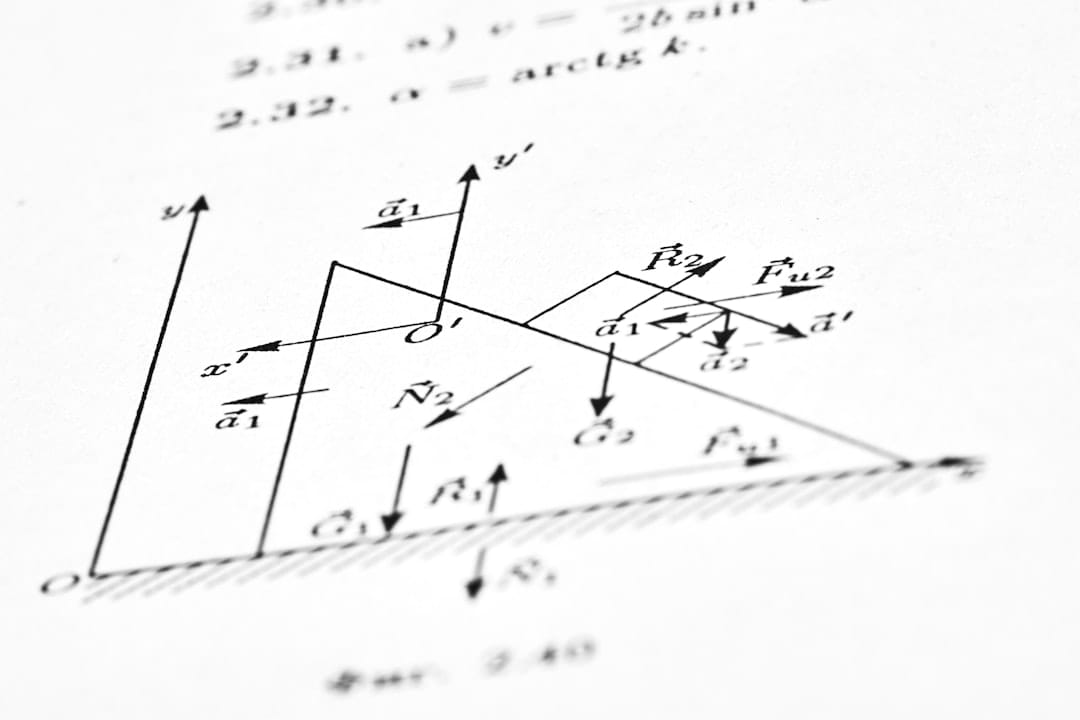

Mixture of Experts Explained: The Architecture Behind Every Frontier Model

Every frontier model released in the last 18 months uses Mixture of Experts. DeepSeek-V3 activates just 37 billion of its 671 billion parameters per token. Understanding how MoE works isn't optional anymore.

Inference-Time Compute Is Escaping the LLM Bubble

Explore how inference-time compute scaling lets AI models think longer and reason deeper, boosting accuracy without retraining.

China's Qwen Just Dethroned Meta's Llama as the World's Most Downloaded Open Model

The numbers don't lie. In 2025, Qwen became the most downloaded model series on Hugging Face, ending the reign of Meta's Llama as the default choice for open-sour...

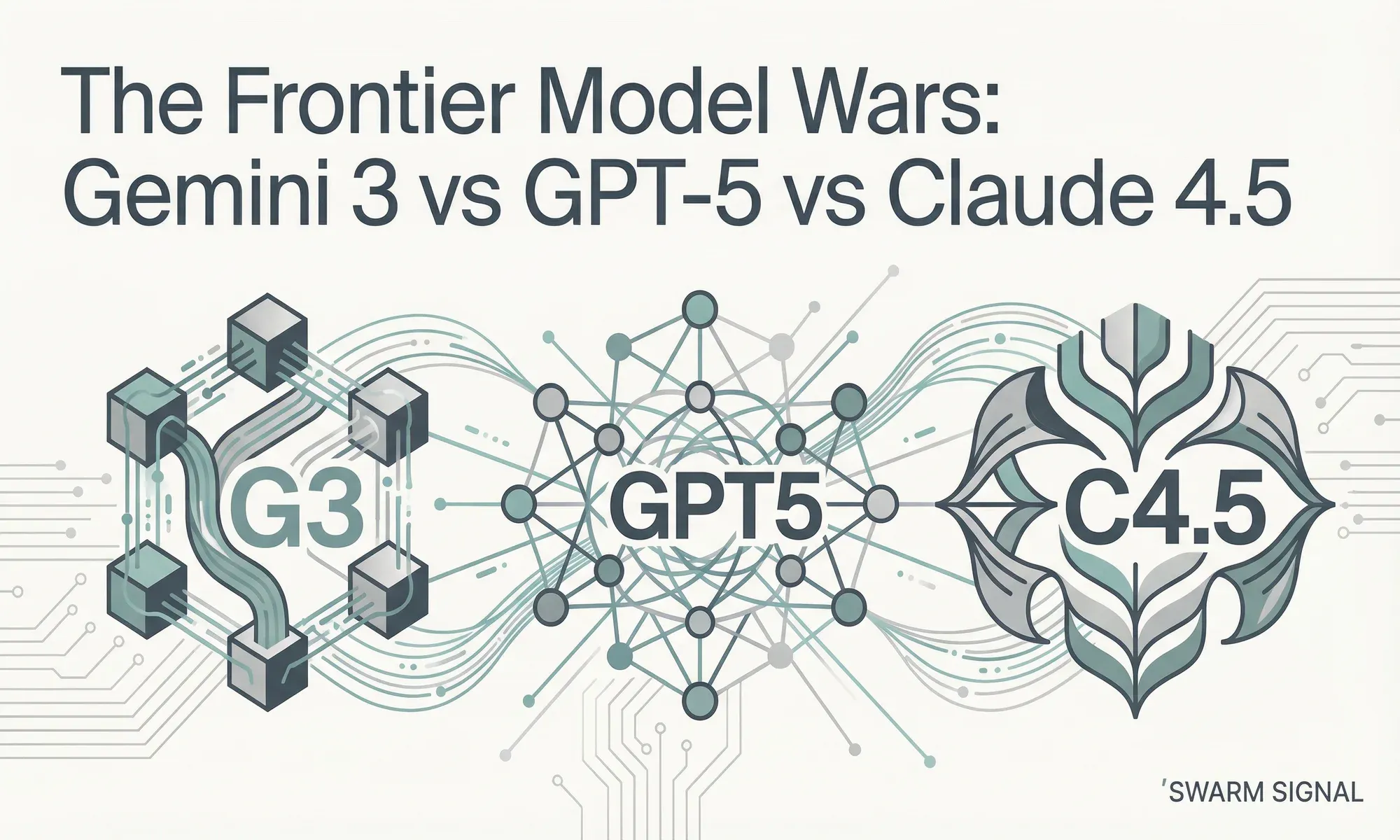

The Frontier Model Wars: Gemini 3 vs GPT-5 vs Claude 4.5

Google's Gemini 3 Pro scores 91.9% on GPQA Diamond, giving it nearly a 4-point lead over GPT-5.1's 88.1%. But Clarifai's model comparison shows Claude achi...

2026 Is the Year of the Agent. Here's What the Data Actually Says

Every major cloud vendor and analyst firm agrees: 2026 is the year AI agents go from pilot to production. The data backs them up, but it also reveals that the gap between adoption and outcomes is wider than anyone is admitting.

From Lab to Production: Why the Last Mile of AI Deployment Is Actually a Marathon

The models have never been better. The deployment rate has never been worse. Here is what's actually breaking between 'it works in a notebook' and 'it runs in production.'

The Training Data Problem: Why What Models Learn From Matters More Than How Much

The AI industry's defining bottleneck has shifted from architecture and compute to something far less glamorous: the data itself.

When Models See and Speak: The Multimodal Agent Arrives

Multimodal agents are navigating websites, controlling robots, and generating 3D scenes. But perception is the bottleneck, and bridging it requires rethinking how models attend to the world.

Robots With Reasoning: When Language Models Meet the Physical World

A robot arm completing 84.9% of manipulation tasks without a single demonstration. Not through months of reinforcement learning: through pure language model reasoning. The line between software agents and physical robots is blurring.